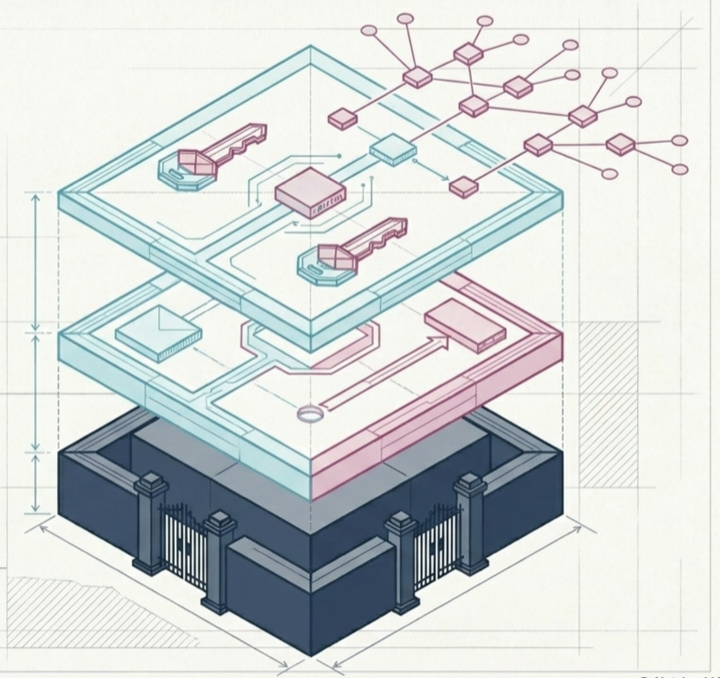

Every agent action passes through AI Agent Gateway.

A single enforcement point between agents and enterprise systems. Deployed inside your infrastructure. No agent changes required.

The agent asks. We check. The system responds.

No framework rewrites. No new SDK. An agent already configured for MCP, REST, or HTTPS is pointed at AI Agent Gateway instead of the target system and inherits the entire enforcement model.
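Here is a minimal sketch of what "pointing an agent at the gateway" can mean on the agent side. It assumes the agent builds the `POST /mcp/tools/call` request this page uses as its example. The payload is identical in both cases, and only the base URL changes. Both URLs, the `build_tool_call` helper, and the sample `args` and token values are hypothetical. The request shape follows this page's simplified example rather than the MCP JSON-RPC wire format.

```python
import json
import urllib.request

# Hypothetical endpoints: neither URL comes from the product documentation.
UPSTREAM_URL = "https://tools.example.com"               # where the agent sent calls before
GATEWAY_URL = "https://agent-gateway.internal.example"   # gateway deployed in your infrastructure


def build_tool_call(base_url: str, tool: str, args: dict, token: str) -> urllib.request.Request:
    """Build (but do not send) a tool-call request against base_url.

    Hypothetical helper: the body mirrors the page's example request shape.
    """
    body = json.dumps({"tool": tool, "args": args, "token": token}).encode("utf-8")
    return urllib.request.Request(
        url=f"{base_url}/mcp/tools/call",
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


# Same tool, same arguments, same token: only the destination differs.
direct = build_tool_call(UPSTREAM_URL, "gmail.send", {"to": "ops@example.com"}, "st_demo")
via_gateway = build_tool_call(GATEWAY_URL, "gmail.send", {"to": "ops@example.com"}, "st_demo")
```

In a real agent the base URL would typically come from configuration, which is why switching destinations does not require touching the agent framework itself.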

```
POST /mcp/tools/call
{
  "tool": "gmail.send",
  "args": { ... },
  "token": "st_a1b2c3..."
}

# AI Agent Gateway enforces
→ scope check
→ guardrail check
→ audit log

200 { "status": "sent" }
```
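The three enforcement steps can be sketched as a small request handler. This is an illustration under stated assumptions, not the gateway's actual implementation. The token-to-scope table, the email-domain guardrail, the audit record fields, the 403 status for denials, and the stand-in backend are all hypothetical. The sketch runs the scope check, then the guardrail check, then writes an audit entry for every decision, and it forwards only allowed calls to the backend.

```python
from datetime import datetime, timezone
from typing import Callable

# Hypothetical policy data: which tools each token may call at all.
TOKEN_SCOPES: dict[str, set[str]] = {"st_demo": {"gmail.send"}}

# Hypothetical guardrail: gmail.send may only address this domain.
ALLOWED_EMAIL_DOMAIN = "example.com"

AUDIT_LOG: list[dict] = []


def scope_check(token: str, tool: str) -> bool:
    """Scope check: is this token permitted to call this tool?"""
    return tool in TOKEN_SCOPES.get(token, set())


def guardrail_check(tool: str, args: dict) -> bool:
    """Guardrail check: are the arguments acceptable for this specific call?"""
    if tool == "gmail.send":
        return str(args.get("to", "")).endswith("@" + ALLOWED_EMAIL_DOMAIN)
    return True


def handle_tool_call(request: dict, backend: Callable[[str, dict], dict]) -> tuple[int, dict]:
    tool, args, token = request["tool"], request["args"], request["token"]

    if not scope_check(token, tool):
        decision = "denied: scope"
    elif not guardrail_check(tool, args):
        decision = "denied: guardrail"
    else:
        decision = "allowed"

    # Audit log: record every decision, allowed or denied, before anything
    # reaches the backend. Only a token prefix is kept (a hypothetical choice).
    AUDIT_LOG.append({
        "time": datetime.now(timezone.utc).isoformat(),
        "tool": tool,
        "token": token[:3] + "...",
        "decision": decision,
    })

    if decision != "allowed":
        return 403, {"error": decision}
    return 200, backend(tool, args)


def fake_gmail_backend(tool: str, args: dict) -> dict:
    """Stand-in for the enterprise system behind the gateway."""
    return {"status": "sent"}


status, body = handle_tool_call(
    {"tool": "gmail.send", "args": {"to": "ops@example.com"}, "token": "st_demo"},
    fake_gmail_backend,
)
print(status, body)  # → 200 {'status': 'sent'}
```

Denied calls never reach `fake_gmail_backend`, but they still leave an audit entry, so the log reflects what agents attempted as well as what they were allowed to do.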